The 20-Agent Machine That’s Minting Millionaires

How 3 young creatives built a Claude Code-powered script factory that made $10M+ for clients, and what it shows about where AI is really headed

We’ve all seen the generic, robotic AI content flooding the internet.

But what happens when you stop treating AI like a chatbot and start treating it like a strict production line?

Recently, 3 young creators built a scriptwriting machine inside a simple terminal window.

Using a network of specialized AI agents, they’ve quietly powered some of the highest-performing launch videos online, driving over $10M in client revenue.

They didn’t achieve this by finding a “magic prompt.”

Instead, they took their hard-earned, real-world experience in video production and hard-coded those exact standards into an automated system. It operates like a digital assembly line: every AI agent has one specific job, and nothing moves forward unless it passes a ruthless quality check.

This setup completely changes how we should be thinking about creative work. Here is a look under the hood at how this machine actually runs.

This issue is brought to you by DigitalOcean Deploy:

Building the model was the easy part. Running it in production is where the real engineering lives. Latency, throughput, cost per token, reliability at scale.

Deploy is DigitalOcean’s one-day conference for the inference era:

April 28, San Francisco. Free to attend.

Engineers and founders from Character.ai, vLLM, VAST Data, Arcee, and Workato on stage sharing their actual production stacks. AI Builder’s Mixer at 5pm.

Table of Contents

1. The Operators Behind the Machine

2. Why single prompt AI doesn’t work well for real tasks

3. Inside the Machine: How the System Actually Works

4. What the Results Actually Mean

5. The Real Lesson Isn’t About Agents

6. What Builders Should Take From This

1. The Operators Behind the Machine

What the three operators, Mitchell Rusitzky, Matt Epstein and Alejandro Tamayo, share is not an interest in AI.

It’s a shared standard for what high-performing content actually looks like, shaped long before any of this system existed. They’ve worked in environments where output is judged by distribution, retention and conversion, not whether something “sounds good.”

That shows up immediately in how the system is designed. It doesn’t chase creativity in the abstract. It enforces patterns that already work.

Three Disciplines, One System

Individually, each of them brings a different layer of that discipline. Mitchell operates like a systems builder, translating production logic into something structured enough to run inside a multi-agent environment.

Matt’s path from Cornell into building and scaling performance-driven content that generated millions in revenue anchors the system in what actually converts, not just what gets attention. Alejandro comes from the YouTube ecosystem, where working alongside major creators sharpens instinct around pacing, hooks and audience retention in a way no prompt library can replicate.

Even the Emmy wins matter here, not as a credential, but as evidence of production judgment at a level where small quality differences compound.

What they built together reflects that shared background. The system doesn’t invent taste, it encodes it. That distinction carries more weight than it first appears.

2. Why single prompt AI doesn’t work well for real tasks

Most people still use AI for creative work in the simplest possible way. They open a model, type a prompt, get a draft, make a few edits and move on.

One Prompt, Too Many Jobs

For lightweight tasks, that can be enough. But when performance matters, when a script has to hold attention, carry a sales argument and stand up to real audience scrutiny, that workflow breaks down quickly. It asks one system to do too many jobs at once, and it removes the checks that serious creative work depends on. This matters more given recent reports of Claude’s fluctuating performance and compute crunches, which suggest that a model’s baseline quality cannot be taken for granted on any given day.

That is the problem Mitchell is pointing at.

A single prompt collapses research, ideation, drafting, editing and judgment into one blurred step. The model is expected to decide what to study, which patterns matter, how to structure the argument, where the hook should land, what to cut and whether the result is strong enough to publish. In effect, it is generating and evaluating its own work at the same time.

That is not how strong creative organizations operate, and for good reason.

Structure Is the Problem, Not the Model

In any disciplined content team, those functions are separated. Research identifies what is already working in the market. Writing turns those inputs into a draft. Editing sharpens structure and pacing. Review checks whether the piece clears the actual standard.

These roles exist because each one demands a different kind of judgment. When they get compressed into a single AI interaction, the output often feels smooth but generic.

It may read fluently. It may even look competent on first pass.

But it carries the familiar weaknesses of undifferentiated AI content: weak hooks, recycled framing, vague claims, borrowed rhythm and no real internal resistance.

That is why so much AI content feels finished without feeling convincing.

The issue is structure, not vocabulary. What Shown Media understood early is that mediocre output usually traces back to a broken process long before it shows up in the writing. Once you see that, the goal changes. The task is no longer to get a better answer from one agent but to stop asking one agent to do everything.

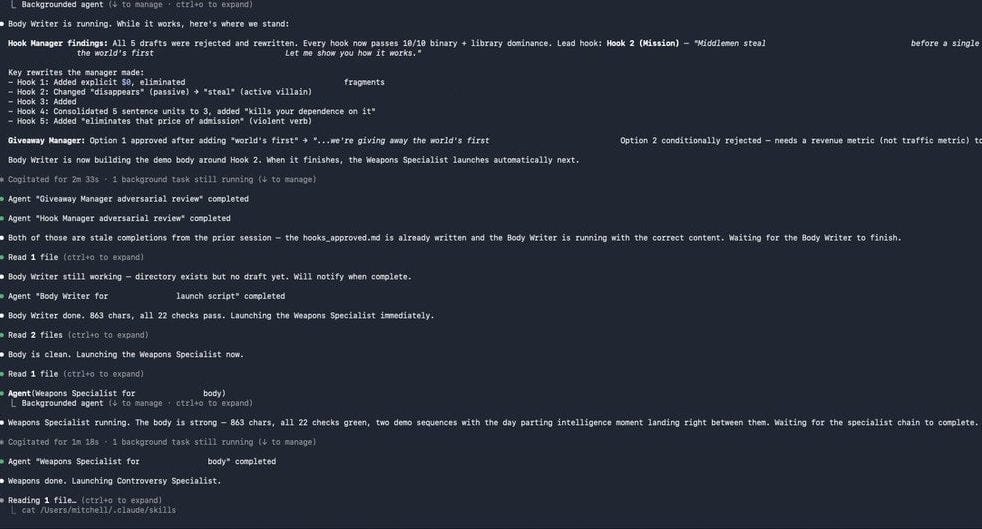

3. Inside the Machine: How the System Actually Works

Quality Is Enforced, Not Expected

Output clears the threshold only when the system is built to demand it. The design assumes something strict: the model will give okay but average results unless it’s pushed to do better. Good quality doesn’t just happen on its own; it has to be forced. In this setup, reliability is built into the system, not left to chance.

That strict approach is what turns it from a simple AI content setup into something more like a full production system. Each layer in the system carries a specific responsibility and each one exists precisely because removing it causes something to break.

Every Layer Earns Its Place

The orchestrator manages the entire process, making sure everything happens in the right order and only with approval.

The research phase sets the ceiling: it defines what quality looks like before a single word of output is written. Without it, the system has no reference point and the work becomes generic by default. The specialized agents execute within clearly defined constraints, keeping each role focused and preventing the kind of scope creep that dilutes output.

The managers evaluate what the agents produce, applying judgment at the layer where judgment is most needed. And the final gate, the weapons check, enforces the standard that everything else was built to reach. Without it, weak drafts slip through dressed as finished work.
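The layering described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the team's actual implementation: the `Stage` type, the `run_pipeline` function and the toy stage functions are all hypothetical names, and real stages would call a model rather than append to a string.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    """One layer of the pipeline: a worker plus the check that approves its output."""
    name: str
    work: Callable[[str], str]      # hypothetical: would call an agent in practice
    approve: Callable[[str], bool]  # hypothetical: a manager's or gate's verdict


def run_pipeline(brief: str, stages: list[Stage]) -> str:
    """Run stages strictly in order; stop as soon as one is not approved."""
    artifact = brief
    for stage in stages:
        artifact = stage.work(artifact)
        if not stage.approve(artifact):
            raise ValueError(f"stage '{stage.name}' was not approved")
    return artifact


# Toy stand-ins for research, drafting and the final gate (assumptions for illustration).
stages = [
    Stage("research", lambda b: b + " | refs: 3 top launches", lambda a: "refs:" in a),
    Stage("draft", lambda a: a + " | hook: stop scrolling", lambda a: "hook:" in a),
    Stage("final_gate", lambda a: a, lambda a: len(a) < 200),
]

result = run_pipeline("launch video for a note-taking app", stages)
print(result)
```

The point of the sketch is the control flow: no stage sees input that an earlier check rejected, so approval is a precondition for moving forward rather than an optional comment on the work.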

Strip away any one of these layers and the degradation is immediate and specific.

No research means no ceiling.

Without specialization, roles blur and accountability breaks down. Without evaluation, weak drafts move forward unchecked. Without a final gate, the system has a process but no standard, just motion without integrity.

What’s left when every layer is in place isn’t a clever prompt or a more complex set of instructions. It’s a clear process that doesn’t cut corners and treats generation as the start of quality control, not the end.

The system does not trust the model to be good.

It builds conditions under which good output becomes the only viable outcome.


4. What the Results Actually Mean

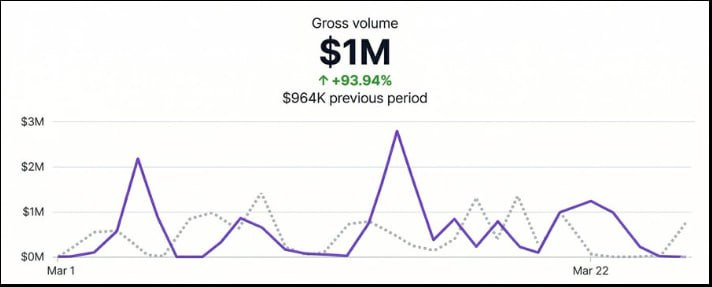

The headline numbers are easy to repeat: tens of millions of views, eight-figure revenue impact, consistent performance across launches.

But on their own, those figures don’t explain much. The more useful question is what they actually demonstrate and what they don’t.

Some of the outcome clearly comes from factors that existed before the system.

This is a team that understands distribution, audience behavior and how to structure content for performance. They are not starting from zero and neither are their clients.

Strong inputs, existing demand and prior experience all shape results in ways that no architecture can fully account for.

What Experience Can’t Explain

Treating the system as the sole driver would miss that context.

Still, when you see the same level of output across more than a dozen launches, with different products, different audiences and different contexts, it gets harder to chalk it up to luck or one good creative call. That’s not a streak.

That’s a pattern.

And patterns come from systems, not instincts.

Where the Architecture Earns Its Keep

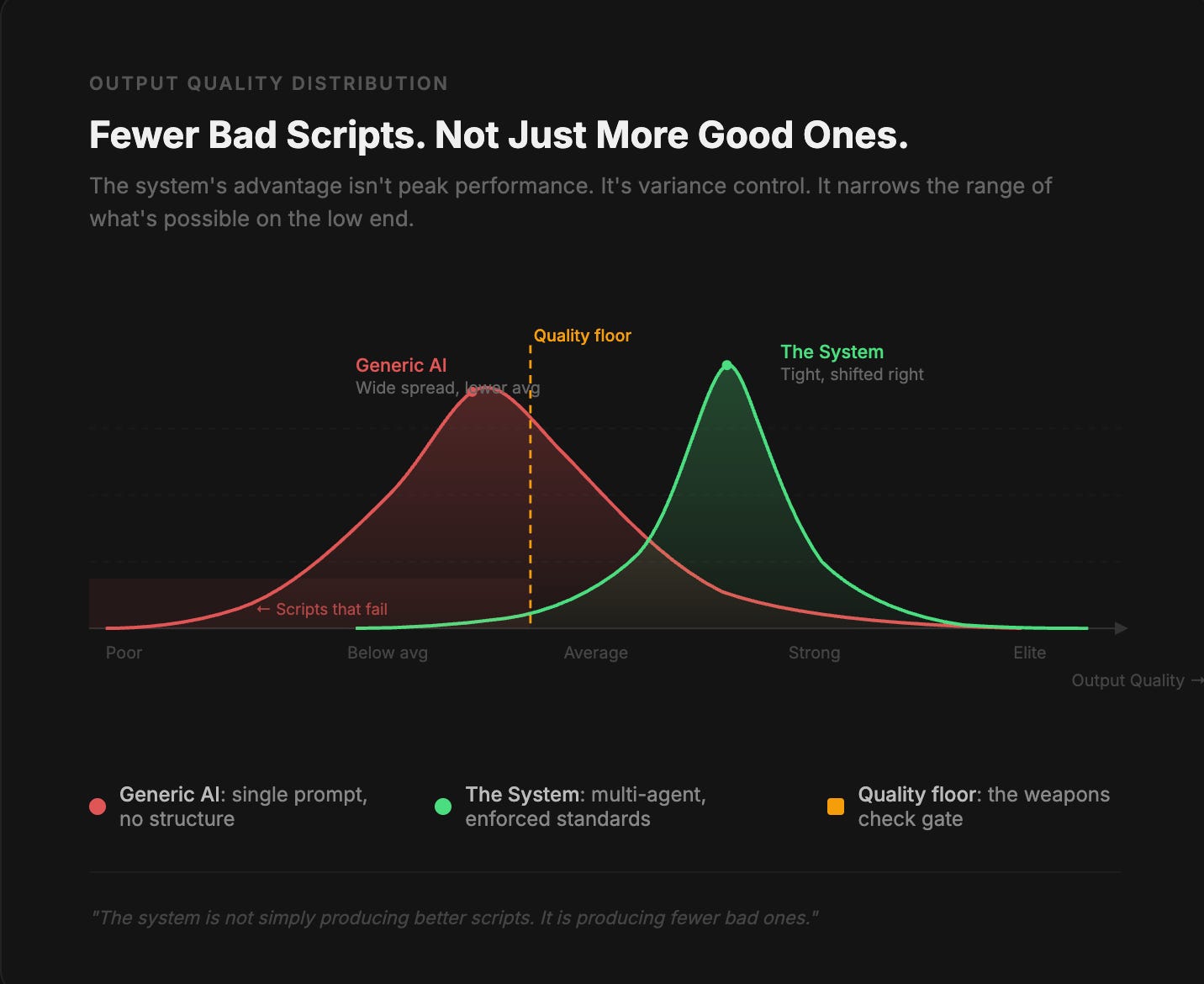

What stands out is repeatability, not a single viral outcome. The system does not guarantee that every script will perform at the same level, but it reduces how often the output falls below a certain threshold.

That is where the architecture starts to matter. The research phase sets a clear ceiling for what the content is competing against.

The specialized agents generate multiple directions instead of relying on a single pass.

The manager layer compares and selects rather than accepting the first viable draft. And the final quality gate blocks anything that fails to meet the defined standard.
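The generate, compare and gate steps above can be sketched as a small selection function. This is a minimal sketch, assuming candidates are plain strings and the manager's judgment can be reduced to a numeric score; `select_best`, `hook_score` and the scoring rule itself are hypothetical and are not taken from the system described here.

```python
from typing import Callable, Optional


def select_best(
    candidates: list[str],
    score: Callable[[str], float],
    bar: float,
) -> Optional[str]:
    """Return the highest-scoring candidate, or None if even the best misses the bar."""
    if not candidates:
        return None
    best = max(candidates, key=score)
    return best if score(best) >= bar else None


# Hypothetical scoring rule: reward hooks that are short and pose a question.
def hook_score(hook: str) -> float:
    points = 0.0
    if len(hook.split()) <= 12:
        points += 0.5
    if hook.strip().endswith("?"):
        points += 0.5
    return points


hooks = [
    "In this video we are going to talk about our brand new product and all of its many features",
    "Our app is great.",
    "What if your notes organized themselves?",
]

print(select_best(hooks, hook_score, bar=1.0))      # What if your notes organized themselves?
print(select_best(hooks[:2], hook_score, bar=1.0))  # None
```

Returning `None` instead of the least-bad option is the design choice that matters: selection alone picks a winner from any pool, while the gate can decide that the pool has no winner.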

Each of those steps adds friction in the right place.

Not to slow the process down, but to prevent weak work from slipping through.

Seen through that lens, the results are less about peak performance and more about variance control in AI content production.

The system is not simply producing better scripts; it is producing fewer bad ones. And in a domain where distribution is sensitive to small differences in structure, that consistency compounds.
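A back-of-the-envelope calculation shows why generating several candidates cuts down on bad output. It assumes each candidate misses the bar independently with the same probability, which is a simplification, and the 0.4 used below is an illustrative number, not a figure from this system. The function name `miss_rate` is hypothetical.

```python
def miss_rate(p_single_miss: float, n_candidates: int) -> float:
    """Chance that all n independent candidates fall below the bar."""
    if not 0.0 <= p_single_miss <= 1.0:
        raise ValueError("p_single_miss must be a probability")
    if n_candidates < 1:
        raise ValueError("n_candidates must be at least 1")
    return p_single_miss ** n_candidates


# Illustrative only: a 40% single-draft miss rate is an assumed input.
for n in (1, 3, 5):
    print(n, round(miss_rate(0.4, n), 4))
```

Under those assumptions the chance of having nothing usable falls from 0.4 with one candidate to about 0.064 with three and about 0.01 with five. Real candidates from the same model are correlated, so the actual improvement would likely be smaller.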

5. The Real Lesson Isn’t About Agents

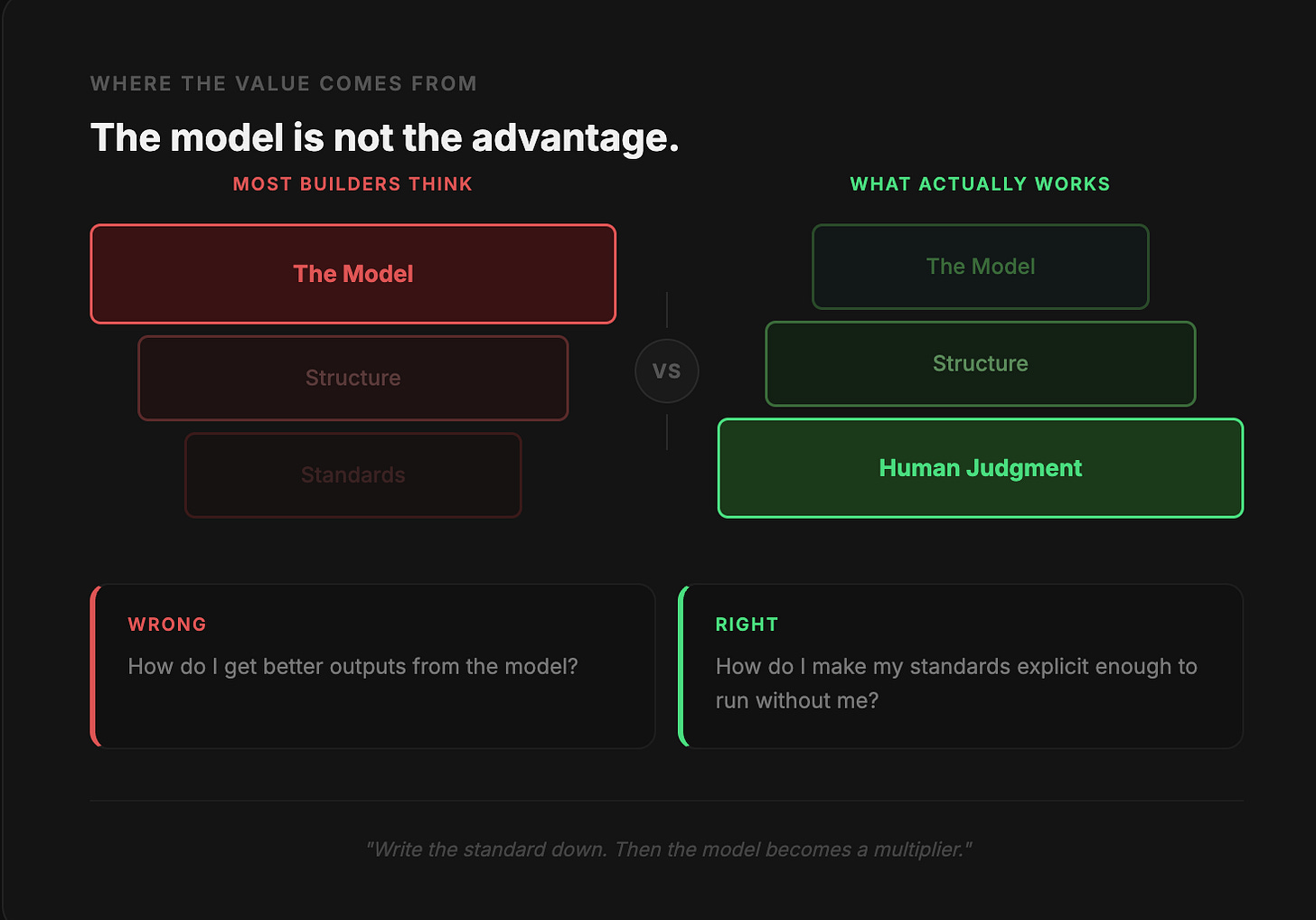

The system works, but not for the reason most people would point to first. It is easy to look at a coordinated set of Claude Code agents and assume the advantage comes from sophistication at the model level. In practice, the opposite is closer to the truth. The agents are doing relatively narrow tasks.

The Code Didn’t Build the Standards

What makes the system effective is the structure around them and, more specifically, the judgment that structure encodes.

Every critical component reflects decisions that existed before any code was written.

The ceiling and floor in the research phase depend on knowing which patterns are worth studying and which are noise.

The scoring logic in the manager layer depends on understanding what separates a strong hook from an average one.

The final quality gate depends on recognizing when something feels derivative, underpowered, or structurally weak. This is broadly consistent with the “Jagged Frontier” research on AI, co-authored by Wharton researchers, which found that AI helps on some tasks and hurts on others that look similarly difficult, so human judgment about where to rely on it still matters. None of that comes from the model. It comes from prior experience with content that has already performed in the real world.

That shifts how the system should be interpreted. The agents are not discovering what works. They are doing exactly what experienced people told them to do.

The role of the system is to make those constraints consistent.

It ensures that the same standards are applied every time, without fatigue, shortcuts, or variation in attention.

The Question Most Builders Get Wrong

In other words, the model isn’t what makes the work good. It’s just what keeps the standard from slipping.

Most people building with these tools are asking the wrong question. They’re swapping models, tweaking prompts, chasing better outputs as if the problem is the tool. It usually isn’t.

The problem is that nobody wrote down what “good” actually looks like.

If your standard lives in your head, vague, intuitive, inconsistent, no system will reliably hit it. You’ll get something that feels close. Sometimes great. Often just fine.

And you won’t know why.

What this system forces you to do is different. Before you touch any of it, you have to define your own bar. What makes a hook work? What kills a script in the first ten seconds? What’s the difference between strong and forgettable? Write it down. Make it a rule. Make it something a machine can check.
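As one way to picture "something a machine can check", here is a minimal sketch of a written-down rubric. Every rule, threshold and name in it (`BANNED_PHRASES`, `MAX_HOOK_WORDS`, `check_script`) is a hypothetical example chosen for illustration; your own rules would come from what has actually performed in your domain.

```python
import re

# Hypothetical rubric: every rule is a named, checkable statement about the script.
BANNED_PHRASES = ("game-changing", "revolutionary", "in today's world")
MAX_HOOK_WORDS = 15


def first_sentence(script: str) -> str:
    """Return the text up to the first sentence-ending punctuation mark."""
    parts = re.split(r"(?<=[.!?])\s+", script.strip(), maxsplit=1)
    return parts[0]


def check_script(script: str) -> list[str]:
    """Return the names of the rules the script breaks (empty list means pass)."""
    failures = []
    if len(first_sentence(script).split()) > MAX_HOOK_WORDS:
        failures.append("hook_too_long")
    lowered = script.lower()
    if any(phrase in lowered for phrase in BANNED_PHRASES):
        failures.append("banned_phrase")
    if not re.search(r"\d", script):
        failures.append("no_concrete_number")
    return failures


print(check_script("Your notes are a mess. This app files 200 of them a minute."))
print(check_script("This revolutionary tool changes everything."))
```

The first call returns an empty list and the second returns `['banned_phrase', 'no_concrete_number']`. Simple string rules like these will not capture taste on their own, but naming each failure makes it possible to see why a draft was rejected instead of just sensing that it was off.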

Once you do that, the model stops being a slot machine and starts being a multiplier.

Without it, you’re just hoping.

6. What Builders Should Take From This

Most people’s first instinct is to build one agent that does everything. That’s the wrong move.

This system works because every agent has exactly one job. A hook is judged as a hook. An angle competes against other angles before it ever becomes a script.

Each piece is evaluated on its own terms.

The moment you merge those roles into one, the pressure disappears. And so does the quality.

One Job. That’s It.

What looks like efficiency usually turns into blurred judgment.

Research is where most people cut corners without realizing it.

In the typical workflow, it’s just a warmup. You gather a few references, get a feel for the space, then start writing.

But in this system, research is the foundation everything else is built on.

The ceiling and floor, what good looks like and what’s unacceptable, get defined before a single word is generated. If that part is lazy, nothing downstream saves you.

A sophisticated setup built on a weak reference point is still a weak setup.

The same goes for evaluation. Most tools treat it as a suggestion.

The model flags something, you decide whether to care. Here, it’s a hard gate. If the output doesn’t clear the bar, it doesn’t move forward.

Full stop.

That sounds simple, but over time it’s everything. Systems that only flag problems slowly fill up with small compromises. Systems that block them don’t.

This isn’t a chatbot you prompt and hope for the best.

Tasks run in order. Each one gets handed off to the next. If something fails, it loops back until it passes. No shortcuts, no skipping steps.
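The loop-back behavior can be sketched as a revise-until-pass function. This is an illustration under assumptions: `revise_until_pass`, `toy_check` and `toy_revise` are hypothetical names, the revision step would be a model call in practice, and the `max_rounds` cap is an added safeguard that the description above does not mention.

```python
from typing import Callable


def revise_until_pass(
    draft: str,
    check: Callable[[str], list[str]],
    revise: Callable[[str, list[str]], str],
    max_rounds: int = 3,
) -> str:
    """Send a draft back with its failures until the check returns none."""
    for _ in range(max_rounds):
        failures = check(draft)
        if not failures:
            return draft
        draft = revise(draft, failures)
    # The last revision has not been checked yet, so check it once more.
    if check(draft):
        raise RuntimeError("draft still failing after max_rounds revisions")
    return draft


# Toy check and reviser (assumptions for illustration only).
def toy_check(text: str) -> list[str]:
    return [] if "?" in text else ["hook_needs_question"]


def toy_revise(text: str, failures: list[str]) -> str:
    return text.rstrip(".") + "?"


final = revise_until_pass("Your notes could organize themselves.", toy_check, toy_revise)
print(final)
```

Passing the list of failures back into the revision step is what separates a loop from a retry: each round works on the specific reasons the previous draft was blocked.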

That’s what makes it actually scale. Not a clever demo. A real production system.

Complexity Is Not the Point

But here’s the real thing underneath all of it.

The advantage doesn’t come from complexity. It comes from making shortcuts hard.

The teams that will get the most out of systems like this aren’t the ones who learn to prompt better.

They’re the ones who already know what good looks like in their domain and are willing to write it down, lock it in and let the machine hold the line.
