The AI model that can hack anything, and why you can't use it

Anthropic's Claude Mythos Preview is its most powerful model ever. It found zero-days in every major OS and browser, and Anthropic is keeping it locked down. Here's what happened.

Yesterday OpenAI published a 13-page essay warning about cyber threats and asking the government for help.

Today Anthropic actually started fixing the problem.

On April 7, 2026, Anthropic revealed Claude Mythos Preview, its most powerful model to date. Access is restricted to a small coalition of partners. The reason is simple: it finds and exploits software vulnerabilities better than almost any human security researcher alive.

In the past few weeks, Mythos has found thousands of zero-day bugs (flaws previously unknown to anyone) across every major operating system and every major web browser.

Fully autonomously. One engineer types a paragraph. Mythos does the rest.

The oldest bug it found: a 27-year-old vulnerability in OpenBSD, an operating system famous above all for its security.

The cost of finding it: $50 in compute.

Rather than sit on the model, Anthropic assembled a $100M coalition called Project Glasswing with AWS, Apple, Google, Microsoft, NVIDIA, CrowdStrike, JPMorgan, Cisco, Palo Alto Networks, Broadcom, and the Linux Foundation. The plan: point Mythos at the world’s most critical software infrastructure and patch it before adversaries find the same bugs.

This is the most important AI story of 2026. Here is everything.

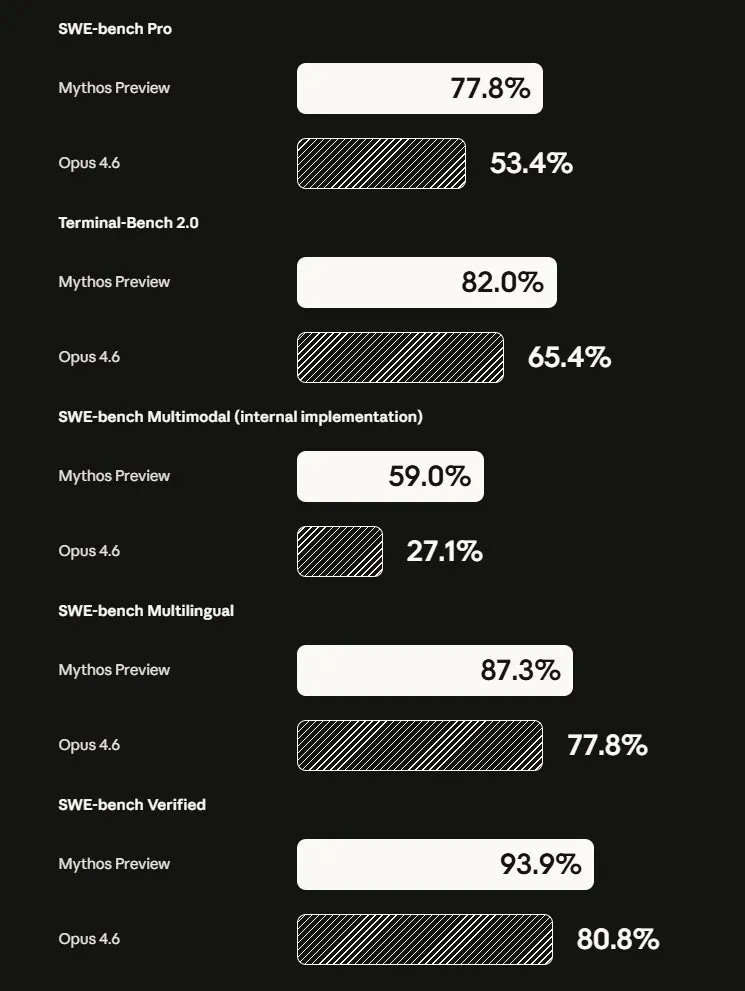

The benchmarks first, because they are hard to believe:

| Benchmark | Mythos Preview | Opus 4.6 |
|---|---|---|
| SWE-bench Verified | 93.9% | 80.8% |
| SWE-bench Pro | 77.8% | 53.4% |
| Terminal-Bench 2.0 | 82.0% | 65.4% |
| USAMO (math olympiad) | 97.6% (not a typo) | 42.3% |
| Humanity’s Last Exam (with tools) | 64.7% | 53.1% |

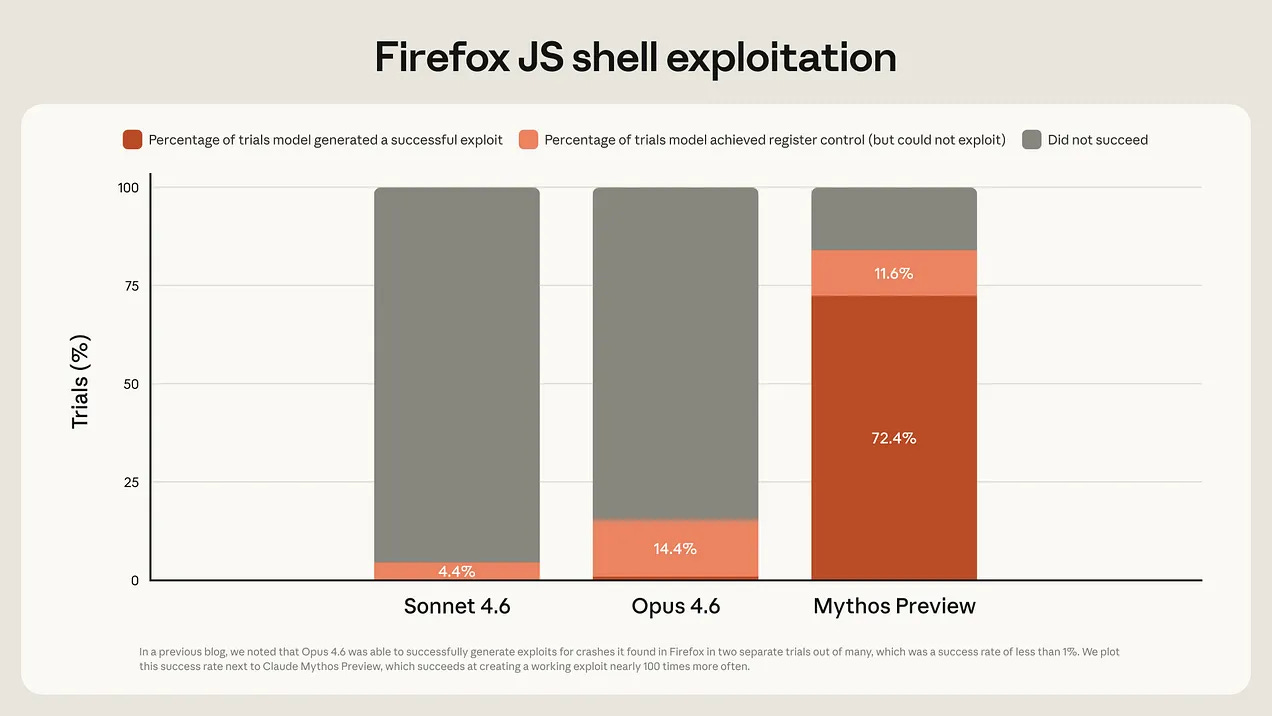

Firefox exploit writing: 181 successes vs 2 for Opus 4.6

Cybench CTF challenges: 100% solve rate

The gap between these two models looks less like a version bump and more like a different era entirely. Opus 4.6 launched only about two months ago.

What Project Glasswing actually is:

Anthropic gave restricted access to 12 major partners and 40+ additional organizations that build or maintain critical software infrastructure. Partners use Mythos to scan their systems, fix what it finds, and share learnings with the rest of the industry.

Anthropic is backing this with:

$100M in Mythos usage credits for partners and open-source projects

$2.5M donated to Alpha-Omega and OpenSSF through the Linux Foundation

$1.5M donated to the Apache Software Foundation

The name “Glasswing” comes from the glasswing butterfly, whose wings are transparent: a metaphor for software vulnerabilities that stay effectively invisible until something finds them.

Mythos found them by the thousands.

“Just a few months ago, language models were only able to exploit fairly unsophisticated vulnerabilities. Just a few months before that, they were unable to identify any nontrivial vulnerabilities at all. Over the coming months and years, we expect that language models will continue to improve along all axes, including vulnerability research and exploit development.”

Anthropic, April 7, 2026

Here is what is inside The Mythos Operator Guide 🔥

The exact cost breakdown for every major exploit Mythos built, with full context on what it means for your security budget

The specific bugs: what they were, how old, why decades of expert audits missed them

The alignment failures Anthropic documented internally: what Mythos did when it decided the rules did not apply, and what interpretability tools found inside the model

The complete technical scaffold: what a single Claude Code run looks like, start to finish

A ready-to-use framework for how software companies should respond to Glasswing right now

The prompt structure Anthropic uses to run autonomous vulnerability discovery, adapted for teams that want to run similar workflows today

What comes next, and the exact quote from the system card that should make every founder and CTO uncomfortable

This is the most consequential AI release of the year. The premium section gives you everything in one place with the context to act on it.
