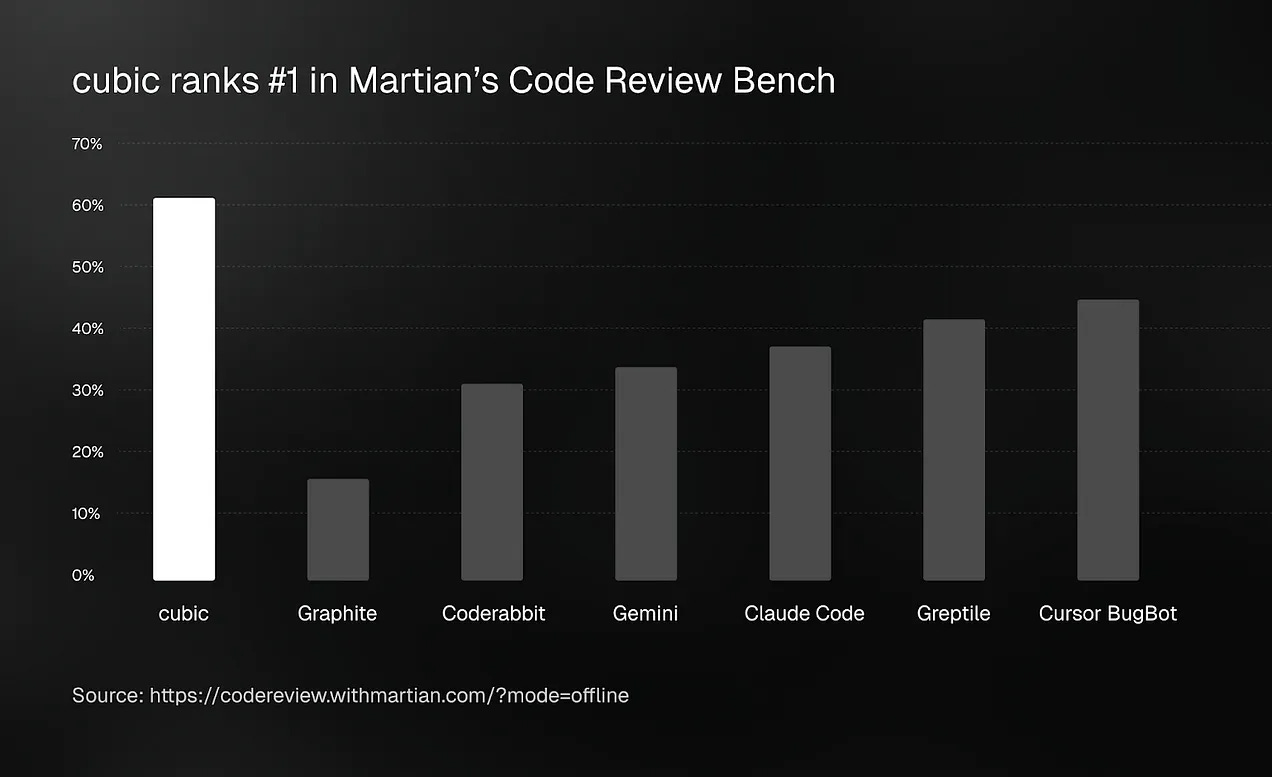

A YC Startup Just Beat Claude Code, Cursor, and Gemini at Code Review

The gap between first and second place is bigger than the gap between second and last.

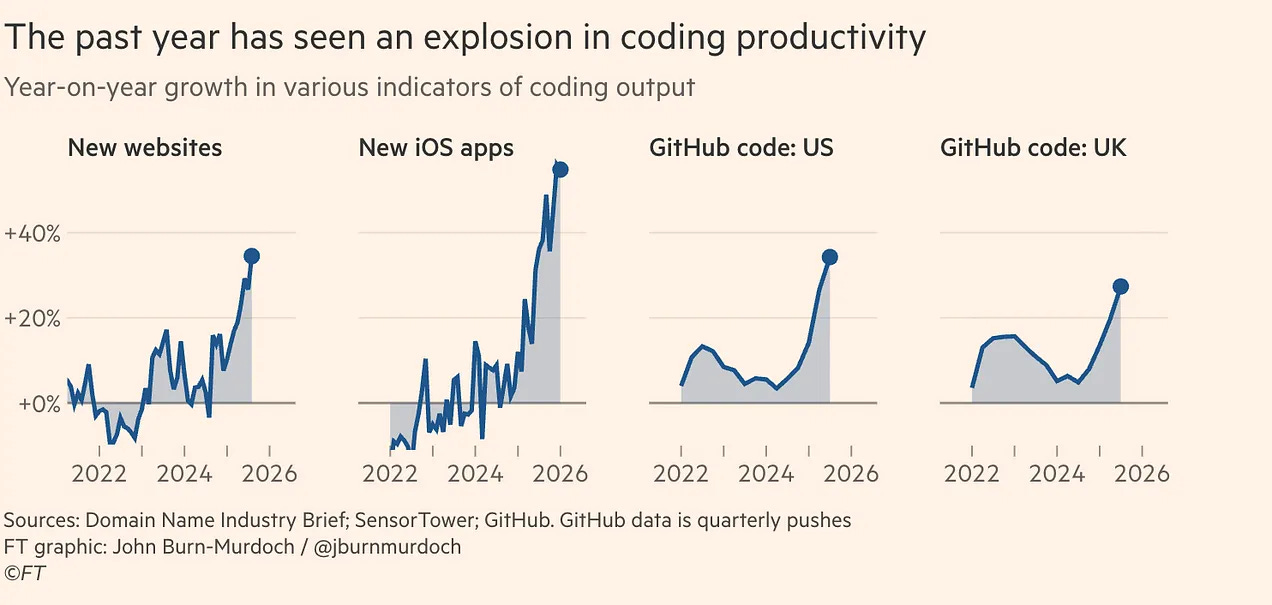

Everyone is racing to write code faster.

cubic is racing to review it better.

That turned out to be the smarter bet.

cubic (YC X25) just ranked #1 on Martian’s Code Review Bench, the first independent benchmark built to test AI code reviewers, ahead of Claude Code, Cursor BugBot, Gemini, and CodeRabbit.

It was a landslide.

The numbers

Why this matters right now

AI coding tools tripled how fast developers write code. The review side stayed the same.

More PRs. Same number of engineers. Bugs slipping through on teams stretched too thin.

cubic puts specialized AI agents on every pull request automatically. Multiple agents, each with one job, running in sequence. And when a bug is hard to find, cubic runs for 24 hours straight to track it down.

That is what caught 11 critical vulnerabilities in a Cloudflare plugin shipped to a FedRAMP government property. Human review missed all of them.

Guillermo Rauch, Vercel’s CEO, posted about it (72,000 views):

“Another critical vulnerability was disclosed today, in addition to the 10 we reported within the first day, which they shipped to a .gov FedRAMP’d property.”

The traction

▫️ $0 to $1M ARR in under a year

▫️ 250,000+ repositories under review

▫️ Customers: n8n, Granola, Resend, Legora

These are serious engineering teams. They chose cubic.

“If your team is on cubic and your competitors aren’t, that’s an unfair advantage.”

Paul Sanglé-Ferrière, founder and CEO

The benchmark makes that very hard to argue with.

The AI Code Review Playbook: How to Ship Faster Without Shipping More Bugs

The complete workflow for teams using AI to write code: how to structure reviews, what to catch before cubic runs, and how to combine AI writing and AI review into a system that actually holds up in production.

Here is exactly what is inside:

▫️ An AI coding workflow that holds up in production: the exact sequence for going from prompt to merged PR without introducing bugs at speed

▫️ PR hygiene rules that make AI review work better: what to do before the AI reviewer runs so the feedback is actually useful

▫️ The 8 categories of bugs that AI-generated code introduces most often: with specific examples and how to prompt your way around each one

▫️ A security checklist for AI-generated code: the vulnerabilities that show up consistently in vibe-coded and LLM-assisted projects, and how to catch them before they ship

▫️ Prompts that produce more reviewable code: how to write prompts for Claude Code and Cursor that generate code structured for easier review, not just faster output

▫️ How to brief cubic for maximum coverage: the setup steps that make the biggest difference in what cubic catches

▫️ The team workflow for AI-native engineering teams: how to structure roles, review queues, and escalation paths when AI is writing most of the code

The AI Code Review Playbook

1. The AI coding workflow that holds up in production

Most teams using AI to write code have the same problem:

Subscribe to The AI Corner to keep reading this post and get 7 days of free access to the full post archives.