Karpathy Let an AI Agent Tune His Code for 2 Days. It Found 20 Things He Missed.

The architecture behind autonomous agents that actually work. Plus 5 principles you can still

I’ve been following Andrej Karpathy’s work for years. When he releases something, I pay attention.

Last week he shared results from a small experiment that stopped me mid-scroll.

He pointed an AI agent at his neural network training code and let it run for two days. The code was already well-tuned. He’d spent months on it. This is literally what he does for a living and has done for two decades.

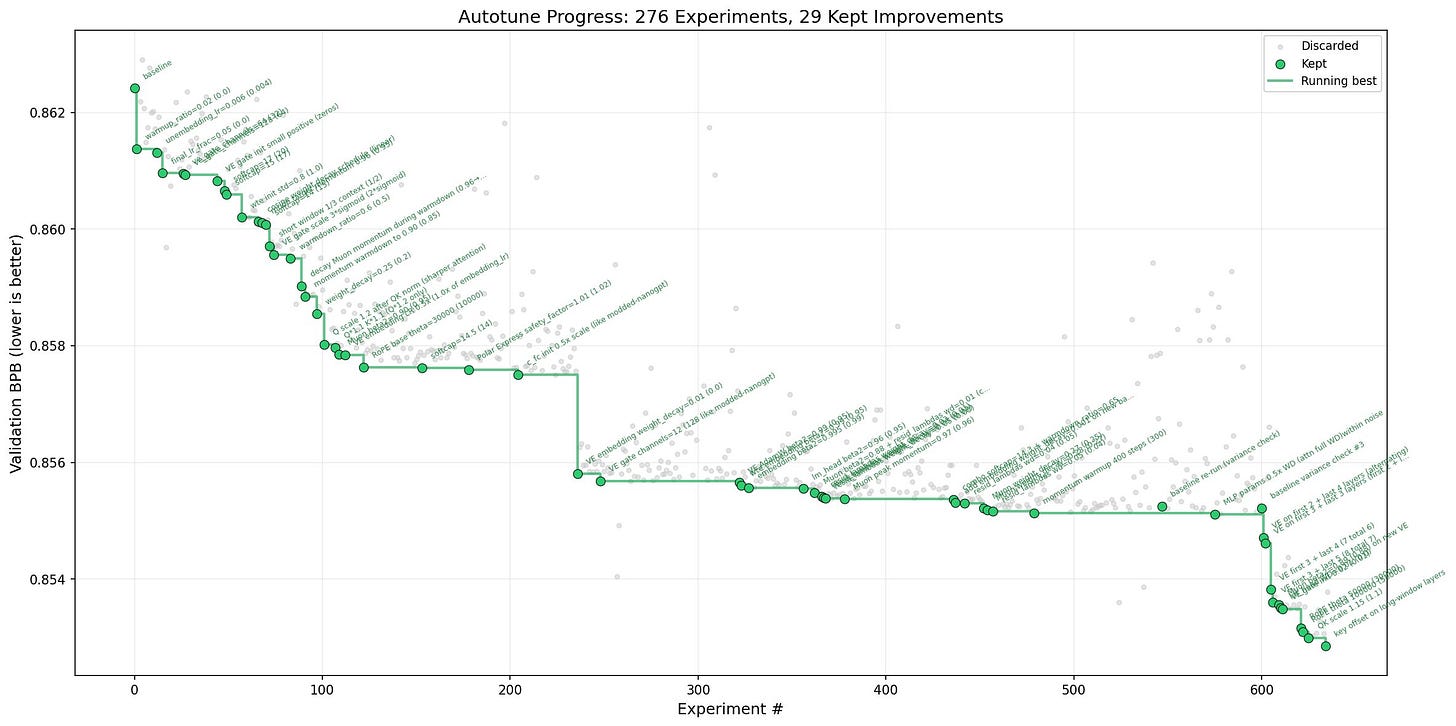

The agent found 20 improvements he’d missed.

Every single one was real. They all stacked together. They all transferred when he tested them on larger models. His benchmark dropped from 2.02 hours to 1.80 hours. That’s 11% faster on code he thought was already optimized.

Here’s what got me: the agent ran about 700 experiments autonomously. It looked at what worked, what failed, and planned the next experiment based on the pattern.

No human in the loop. Just iterate, measure, keep or discard, repeat.
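To make that loop concrete, here is a minimal sketch of "iterate, measure, keep or discard, repeat." It is not Karpathy's code. The function names, the toy config keys, and the toy objective are all hypothetical, and a random perturbation stands in for the agent's planning step. Lower scores are better, as with training time.

```python
import random
from typing import Callable

Config = dict[str, float]


def keep_or_discard_search(
    initial: Config,
    evaluate: Callable[[Config], float],
    propose: Callable[[Config, random.Random], Config],
    n_experiments: int,
    seed: int = 0,
) -> tuple[Config, float, list[tuple[float, bool]]]:
    """Run experiments one at a time, keeping a change only if it scores better."""
    rng = random.Random(seed)
    best, best_score = dict(initial), evaluate(initial)
    history: list[tuple[float, bool]] = []
    for _ in range(n_experiments):
        candidate = propose(best, rng)   # iterate: try one change on top of the best
        score = evaluate(candidate)      # measure
        kept = score < best_score        # keep or discard
        if kept:
            best, best_score = candidate, score
        history.append((score, kept))    # a log the next proposal could learn from
    return best, best_score, history


# Hypothetical stand-ins: a real setup would train a model and return its benchmark.
def toy_evaluate(config: Config) -> float:
    return (config["lr"] - 0.3) ** 2 + (config["beta2"] - 0.95) ** 2


def toy_propose(config: Config, rng: random.Random) -> Config:
    key = rng.choice(sorted(config))     # nudge one setting at a time
    changed = dict(config)
    changed[key] += rng.gauss(0.0, 0.05)
    return changed


if __name__ == "__main__":
    start = {"lr": 0.1, "beta2": 0.8}
    best, score, history = keep_or_discard_search(start, toy_evaluate, toy_propose, 200)
    print(f"kept {sum(k for _, k in history)} of {len(history)} experiments")
```

Because every candidate is built on the current best, the kept changes stack by construction. That is the same property the post describes for the 20 improvements.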

Some of what it caught:

His attention mechanism was too diffuse because a scalar multiplier was missing. The agent found better multipliers and flagged the issue for future work.

His Value Embeddings needed regularization. He wasn’t applying any. His word: “oops.”

His banded attention settings were too conservative. He’d forgotten to tune them.

His AdamW optimizer betas were wrong. Weight decay schedule needed work. Network initialization needed adjustment.

All of this after months of manual tuning by one of the best ML researchers in the world.

His take: “All LLM frontier labs will do this. It’s the final boss battle.”

What’s in our full breakdown:

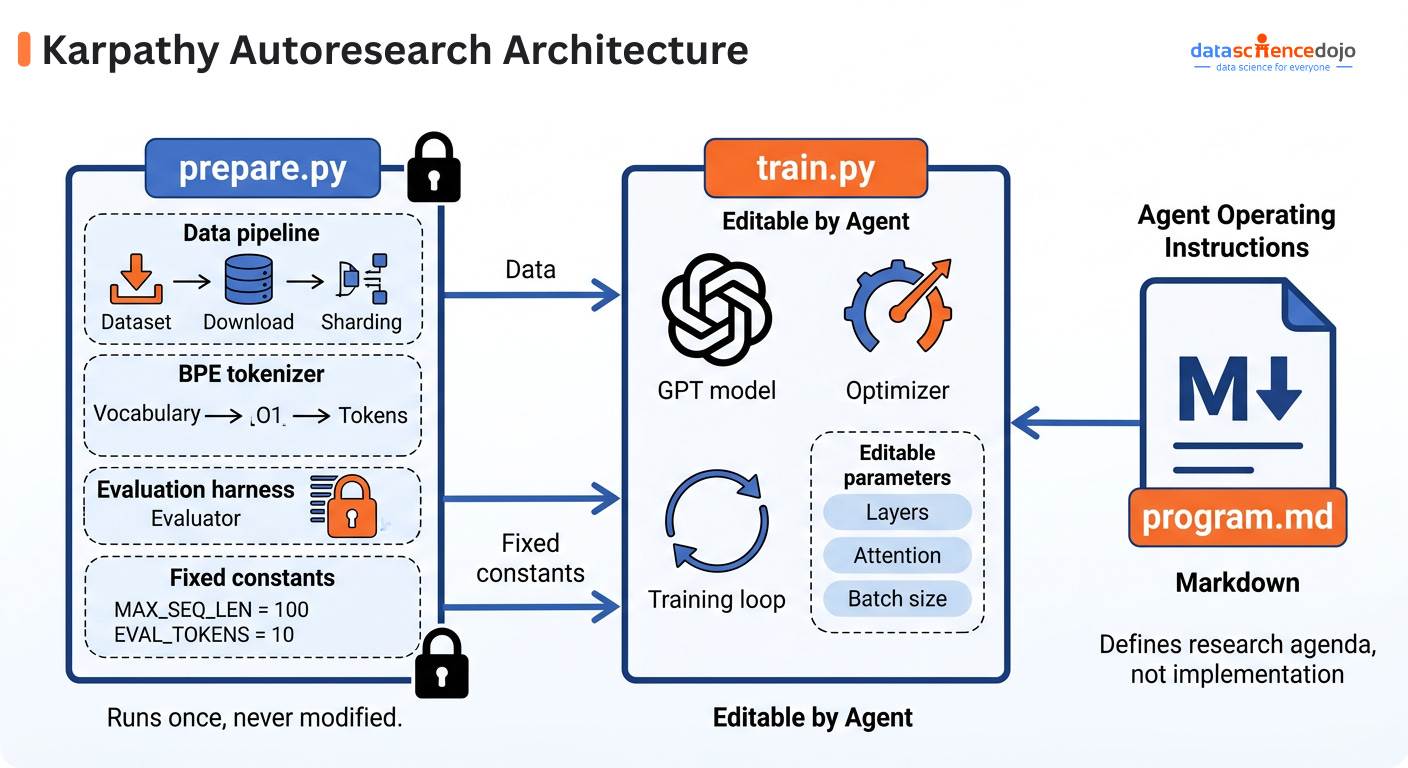

→ The 3-file architecture that makes this kind of autonomous iteration possible

→ Why locking down the evaluation harness is the key decision (prevents the agent from gaming its own benchmark)

→ The keep-or-reset mechanism that turns chaotic exploration into clean evolutionary search

→ How fixed time budgets force agents to find changes that actually matter in practice

→ Failure handling that lets systems run for days without supervision

→ 5 design principles you can apply to any agent system you’re building

→ Why this pattern works for any metric that’s efficient to evaluate
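Two items on that list, fixed time budgets and failure handling, are easy to picture in code. The sketch below is an assumption about how such a runner could look, not the repo's actual implementation. `run_with_budget`, `Result`, and the injectable `clock` are hypothetical names. The idea is that every experiment gets the same wall-clock budget, a fixed `evaluate` function scores whatever state exists when time runs out, and a crash becomes a recorded failure instead of stopping the whole run.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class Result:
    score: Optional[float]  # None when the experiment failed
    error: Optional[str]    # None when the experiment succeeded


def run_with_budget(
    train_step: Callable[[Any], Any],
    evaluate: Callable[[Any], float],
    budget_seconds: float,
    clock: Callable[[], float] = time.monotonic,
) -> Result:
    """Train until the time budget is spent, then score with the fixed evaluator."""
    deadline = clock() + budget_seconds
    try:
        state = None
        while clock() < deadline:
            state = train_step(state)  # the part the agent is allowed to change
        # The evaluator is passed in by the runner, so the agent cannot swap it out.
        return Result(score=evaluate(state), error=None)
    except Exception as exc:
        # Record the failure and move on; the caller treats it as a discard.
        return Result(score=None, error=f"{type(exc).__name__}: {exc}")


if __name__ == "__main__":
    result = run_with_budget(
        train_step=lambda s: (s or 0) + 1,  # toy "training": count steps
        evaluate=lambda s: 1.0 / s,         # toy metric: more steps, lower score
        budget_seconds=0.01,
    )
    print(result)
```

With a fixed budget, a change that makes each step faster and a change that makes each step more useful both show up in the same number, so the search favors whatever helps in the time actually available.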

The repo is about 200 lines of code. The ideas transfer to anything you’re building with agents.
