Making AI Take the Work Seriously 💫

A practical technique for improving reasoning, structure, and decision-making

Something subtle shifted the quality of my AI outputs during a screenshare. I added one extra line to a prompt, almost as a joke, and the response that came back felt immediately different, with clearer reasoning, tighter structure, and decisions that sounded considered rather than padded.

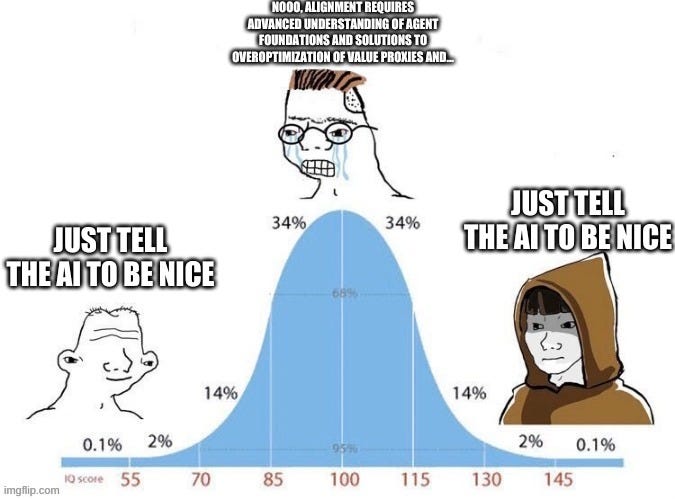

It brought to mind the familiar meme about “just telling the AI to be nice”: simple on the surface, almost dismissible, yet capable of meaningfully changing behavior. I tried the same approach again later, and the effect repeated.

The task itself stayed identical: same model, same context, same request. What changed was the situation the prompt implied, which brought visibility, timing, and consequence into the frame.

The model adjusted accordingly. Writing became more precise, analysis more opinionated, and code reviews more attentive to edge cases, while explanations read as ready to share rather than as drafts needing another pass.

Once you notice this pattern a few times, it becomes difficult to ignore. The model shifts from drafting mode into delivery mode, and the overall quality baseline quietly moves up.

This article explains why this “trick” works and how to use it deliberately.

You get:

- what’s happening inside the model when this shift occurs
- when this improves quality and when it adds friction
- how to apply it safely in daily work
- three prompt injections you can add once and reuse
- versions tuned for ChatGPT and Claude

The trick, explained simply (you’ll love it)👇
