We Taught AI to Write Code But We Forgot to Teach It to Think.

Almost half of commercial code is now AI-generated. Code churn has doubled and the engineers who built these systems can't always explain how they work anymore.

The code looks fine. That is the problem.

Clean PR. Tests green. Knot in your stomach.

You read the diff. Properly indented. Variables make sense. Reads like a dream.

Then you try to trace what it actually does from start to finish.

You have no idea.

That is software development in 2026.

AI coding assistants gave us a strange new superpower: we can now generate more correct-looking code than our brains can comprehend.

The scariest problems do not crash the app. They do not throw errors. They slip past review because the code is beautiful.

We are flying blind. Trusting surface signals. Losing our grip on how our own systems work.

A quiet crisis.

Table of Contents

1. Faster Doesn’t Mean Better, But We Keep Forgetting That

2. The Debt You Can’t See Grows the Quickest

3. Why Code Review Is Failing When It Matters Most

4. The Slow Disaster Nobody Voted For

5. What Governance Actually Looks Like When It Works

6. The Uncomfortable Truth About What This Means for Your Team

1. Faster Doesn’t Mean Better, But We Keep Forgetting That

For a while, this is easy to ignore.

Over time, it becomes impossible to pretend it isn’t there. There’s a real tension at the heart of AI-assisted development that most teams haven’t fully sat with yet.

The experience of going faster and the reality of slowing down are not mutually exclusive. These tools have made both possible at once.

Most teams are only tracking one of them.

Where the time actually goes

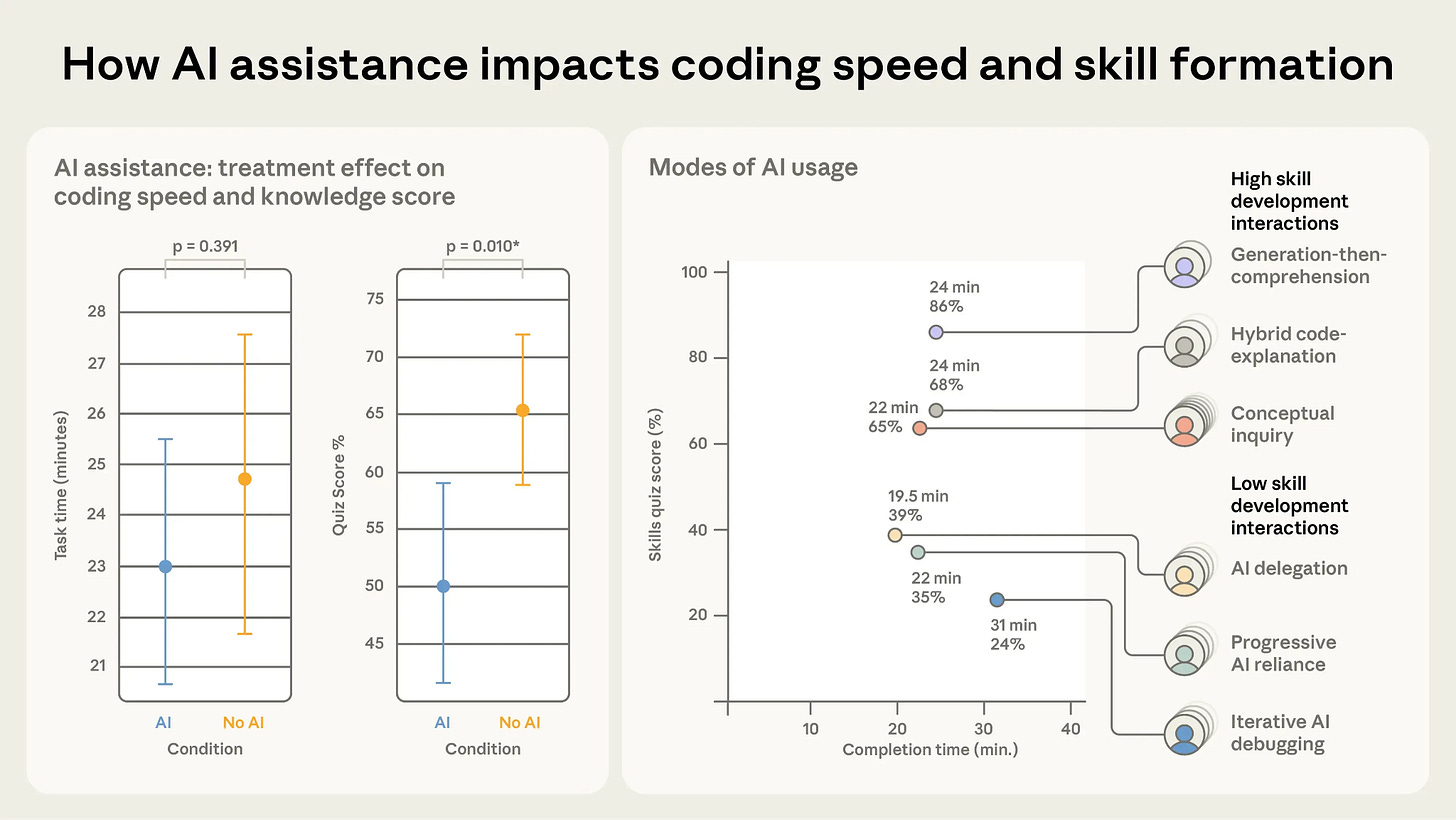

Writing code has never been the bottleneck in professional software development. Understanding it is. Debugging it is. So is modifying code whose reasoning you can’t fully trace.

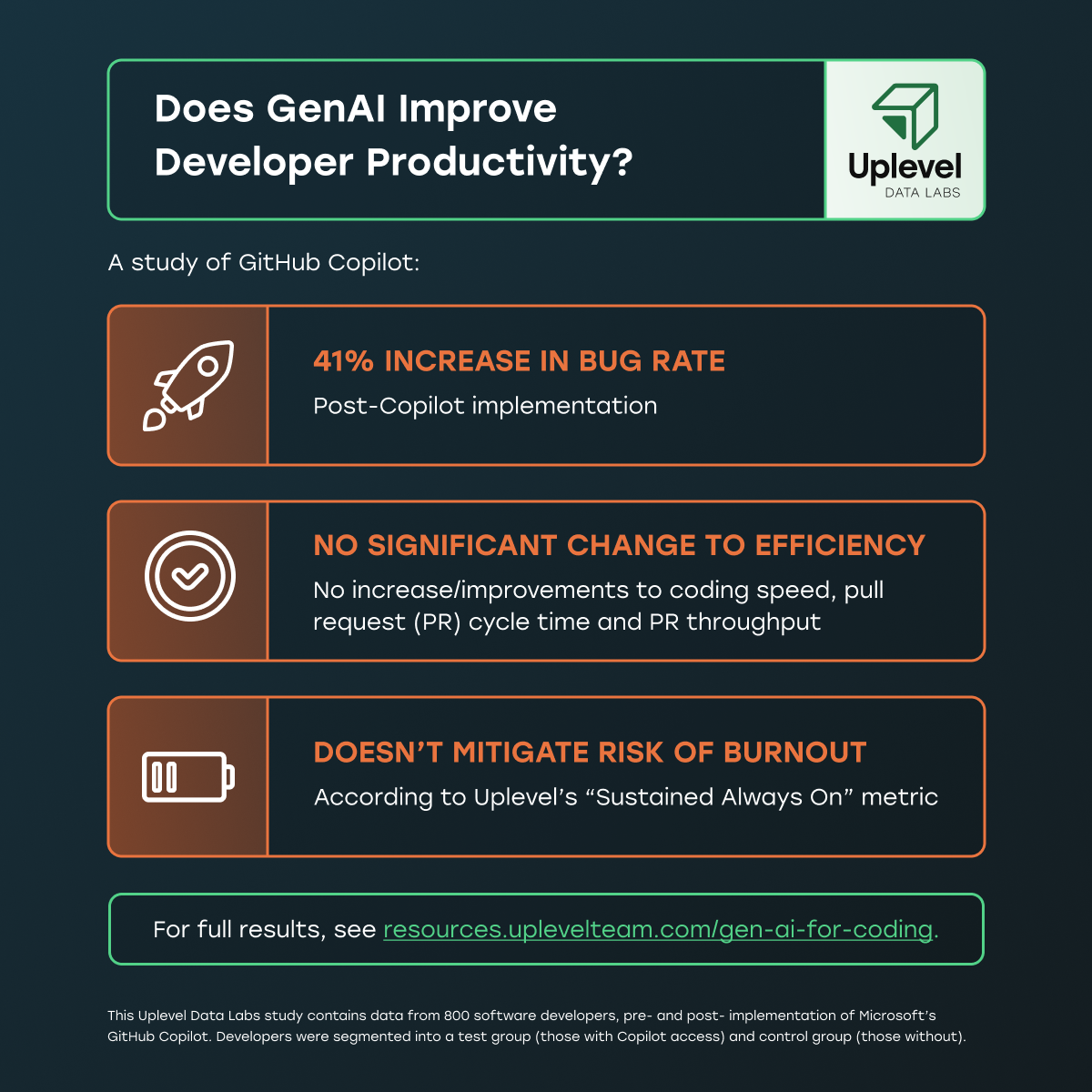

AI has made the fast part of development faster while making the slow parts measurably harder.

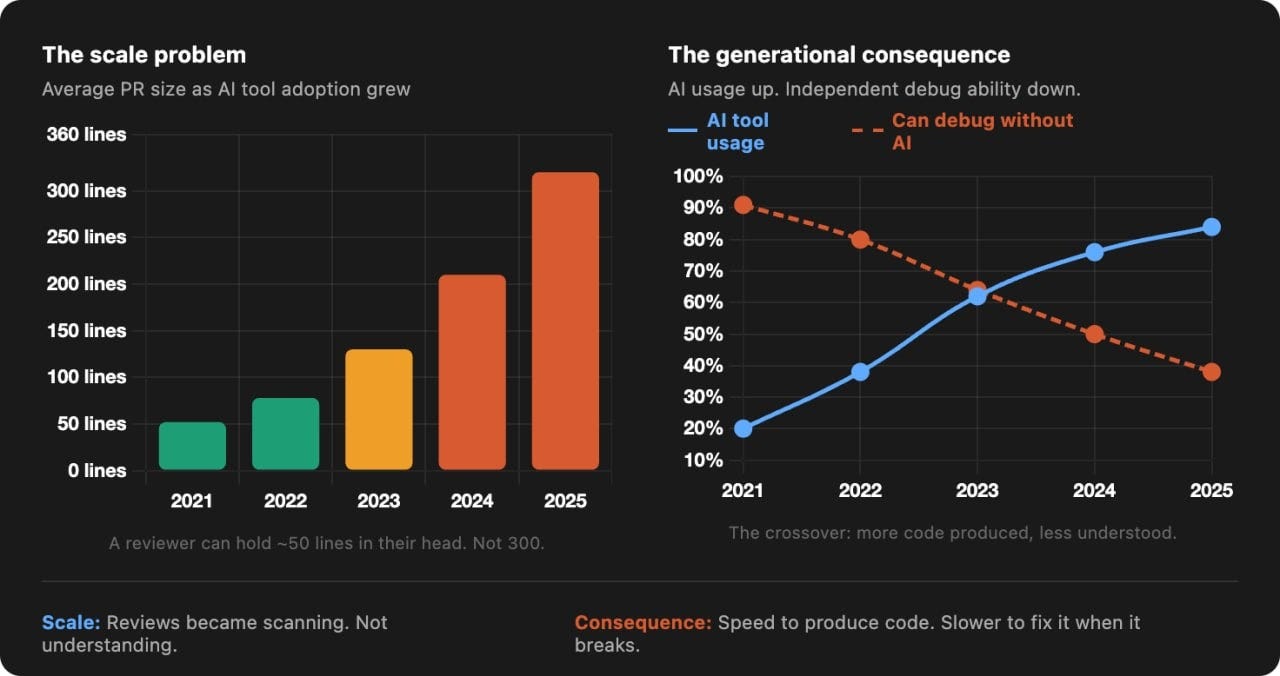

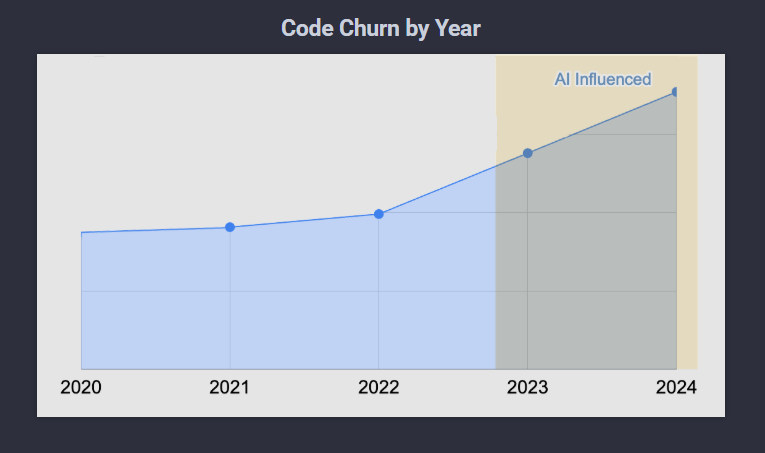

The numbers make this concrete.

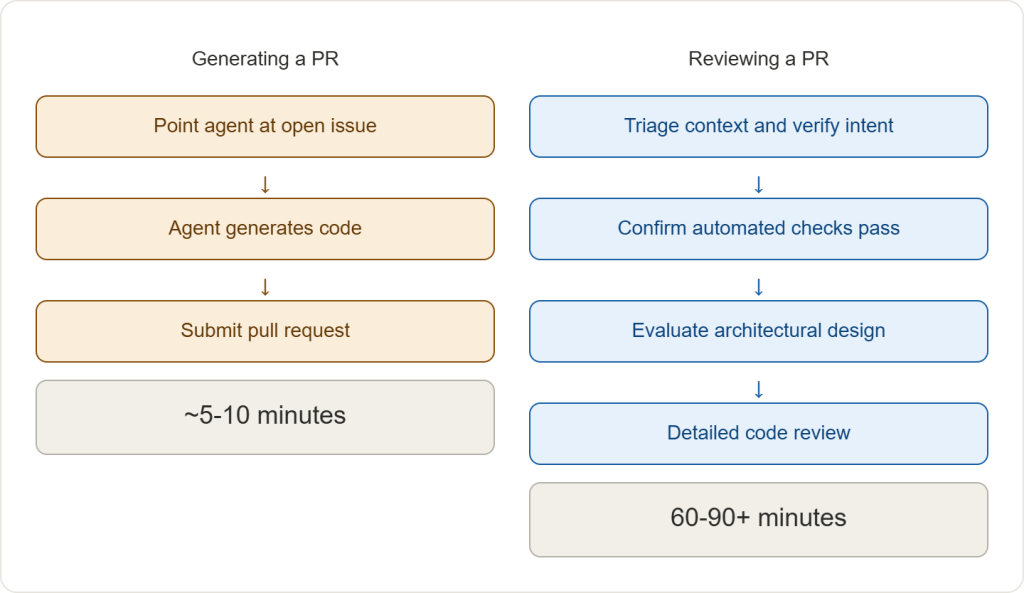

Developers consistently report feeling around 20% faster with AI tools. Measured end to end, through review, integration and production fixes, teams frequently land roughly 19% slower.

That gap stops being surprising once you trace where the time actually goes. Generating code has become cheaper. Verifying and understanding it has become more demanding, because there is simply more of it moving through the system at once.

The planning problem nobody talks about

The deeper issue is what that gap does to how teams make decisions.

You feel faster, so you plan around output.

Deadlines tighten, backlogs look manageable, stakeholders see the commit velocity and feel good about it.

But the constraint doesn’t disappear. It moves into parts of the process that are harder to accelerate and easier to underestimate. It shows up as long review discussions, bugs that take three times as long to isolate because the surrounding logic is unfamiliar, and features that keep spilling into the next sprint.

2. The Debt You Can’t See Grows the Quickest

There’s a point where the issue stops being about speed and starts being about something harder to name.

The system keeps growing.

The code looks clean. Nothing obvious signals that anything is wrong. But if you ask a simple question, “Who on this team fully understands this part of the system end to end?”, the answers get vague very quickly.

What comprehension debt actually looks like

Google engineer Addy Osmani called this comprehension debt: the growing gap between how much code exists and how much anyone genuinely understands.

Unlike traditional technical debt, which announces itself through slow builds and friction you can feel, comprehension debt breeds false confidence.

The system moves. Tests pass.

Velocity looks fine.

The problem is that movement is happening on top of a layer fewer and fewer people can actually speak to.

Researcher Margaret-Anne Storey documented this with a student team that hit a wall seven weeks in, not because the code was messy, but because nobody could explain why decisions had been made or how the system was supposed to fit together.

The shared theory of the software had evaporated.

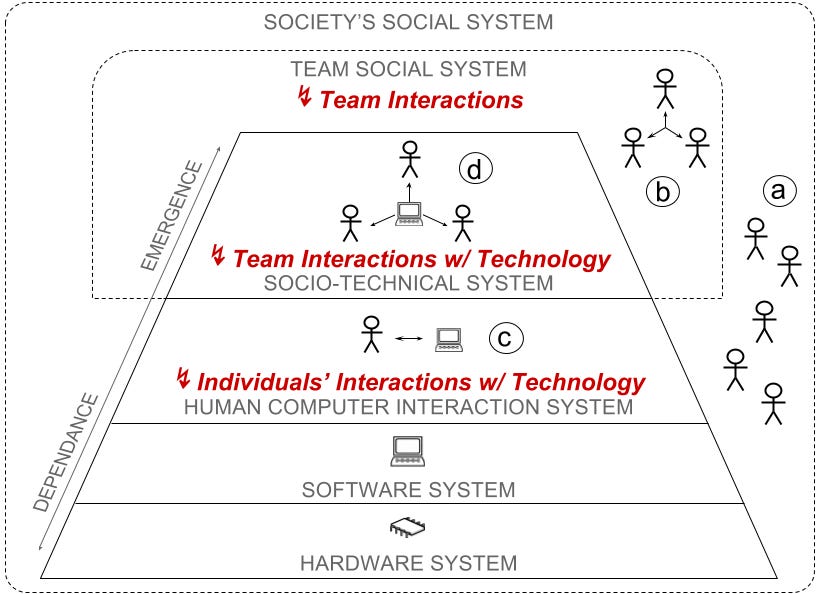

Margaret-Anne Storey’s Socio-Technical Model illustrates how software development relies on layers of human interaction. AI tools often disrupt the “Emergence” of understanding from the individual to the team level.

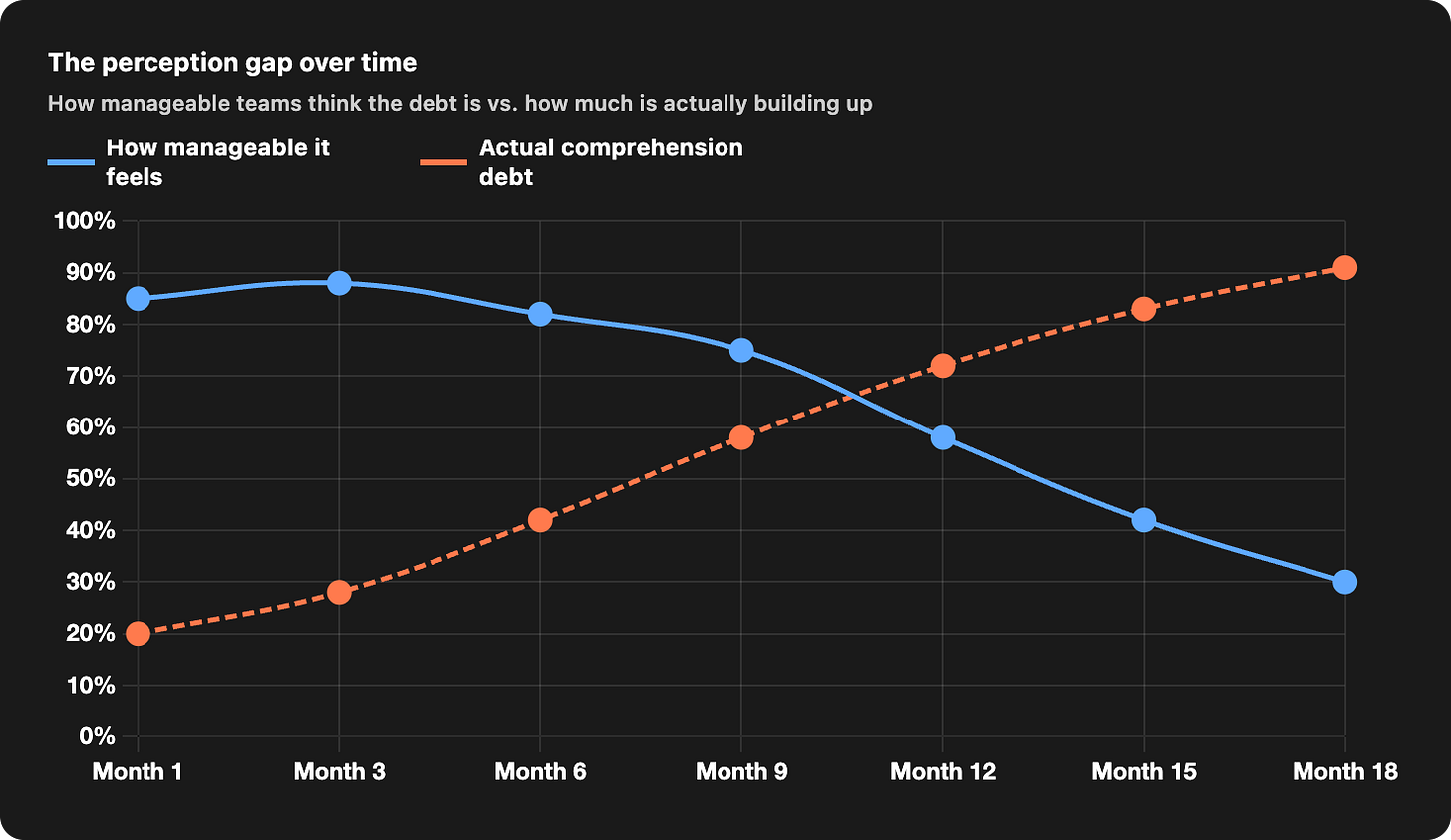

The 18-month arc

Multiple teams have now reported the same trajectory.

The first three months feel like a clear win. The team ships faster, backlogs shrink, the integration of AI tools feels like the right call.

By months four through nine, something changes. Reviews take longer. Changes cover more ground than expected. The code still gets approved, but reconstructing the intent behind it takes real effort.

Around months ten to fifteen, a bug takes longer to fix than it should. The code is readable, but tracing how pieces interact eats time nobody has.

By month sixteen or eighteen, teams start to hesitate. Parts of the codebase feel like territory you approach carefully, even when nothing looks obviously wrong.

That’s when it becomes clear. The system is no longer fully legible to the people responsible for it.

Three patterns drive this.

AI models evolve underneath you, so similar prompts produce inconsistent results over time.

Changes arrive larger and less scrutinized than before.

And AI-generated code looks competent: clean, commented, sensibly named. That earns trust on appearance rather than understanding.

None of this shows up on a dashboard. The system appears healthy. But the ability to safely modify it is quietly eroding.

3. Why Code Review Is Failing When It Matters Most

Code review gets a bad reputation. It’s slow, it’s sometimes awkward and nobody loves having their work picked apart on a Friday afternoon.

But it was never really about catching bugs. It’s one of the few moments where one engineer actually gets inside another’s head.

You start to see how they think.

Why they made that call and not a different one. Where they decided to stop.

Do that enough times, with the same people, on the same codebase and something you can’t really measure starts to form.

Everyone just kind of knows how the system behaves. Not because it’s documented somewhere.

Because they’ve lived in it together. Lose that and the code doesn’t suddenly break. It just becomes a place nobody fully owns anymore.

The scale problem

That function depends on a certain scale.

Reviews work when a person can reasonably hold the change in their head. Not every detail, but enough to trace the intent and spot where something feels off.

When that boundary is crossed, the nature of the review changes. It becomes less about understanding and more about scanning.

AI-assisted workflows push against that boundary constantly.

A change that might once have been 50 lines shows up as several hundred, covering multiple concerns at once.

Verifying it properly means reconstructing how the pieces fit together, not just checking syntax or style. Most reviewers don’t have the time.

We are already seeing the fallout of this in real time. Industry outlets like The New Stack recently reported on a growing crisis where engineering teams and open-source maintainers are actively “drowning in AI-generated code.”

So behavior adapts. Reviews become lighter.

People look for obvious issues, rely on tests as a proxy for correctness and move on.

Nothing about this feels irresponsible in the moment. The problem is that the standard for approval has changed without anyone explicitly deciding it should.

The generational consequence

Strong engineering has always been built through friction. Write something, watch it break, build intuition through debugging.

When a tool handles most of the implementation, that loop compresses. The code works often enough that you stop interrogating why.

Over time, that reshapes what a team collectively knows.

You still have people who can produce code quickly. What becomes less certain is how many can take a failing system, step through it and find the fix without reaching for the same tools that built it.

Code review sits right at that intersection. Under more pressure than ever, at exactly the moment its role in maintaining shared understanding matters most.

4. The Slow Disaster Nobody Voted For

So far this probably sounds like a tooling problem. Something to fix, tweak, manage better.

But that’s not really what’s going on.

The harder truth is that most of the behaviour driving this makes complete sense.

Everyone is acting rationally.

That’s exactly what makes it so difficult to stop.

The deferral logic

If you assume that models will keep improving, deferring cleanup starts to look like the sensible choice. Why spend time simplifying something today when a better model will be able to read, refactor, or regenerate it more easily in a few months?

The cost of waiting appears to go down over time. So teams push forward.

They build faster, accept a little more opacity and trust that future tools will make sense of it when needed.

Each individual decision is easy to justify: shipping now has immediate value, and cleaning up later feels like a smaller, more flexible cost.

When everyone operates under that assumption, the system fills up with code that works but isn’t deeply understood. It doesn’t feel dangerous, because nothing breaks right away.

The subprime logic applied to software

This is the structure of the 2008 mortgage crisis applied to codebases. The risk wasn’t created by obviously reckless decisions.

It built up because the system rewarded short-term gains and made future costs look manageable.

Everyone acted in ways that made sense locally.

The failure came from how those decisions stacked together over time and from the assumption that conditions would keep moving in a direction that made the exposure feel safe.

The same structure is playing out here. Teams optimize for delivery because that’s what gets measured.

They rely on improving tools because that has been a reasonable assumption so far.

Over time, they accumulate a codebase that sits just beyond what they can confidently hold, but not far enough beyond to force a correction.

When that boundary is crossed, the options narrow quickly. What looked like flexibility earlier turns into constraint. And the cost you thought you were deferring doesn’t disappear.

When it finally shows up, it doesn’t trickle in. It lands all at once and by then it’s a much bigger mess than it ever needed to be.

5. What Governance Actually Looks Like When It Works

By the time teams feel the drag, it’s already late.

The ones that avoid it tend to behave differently much earlier, often before the generated code becomes a large share of their workflow.

They don’t treat generation as the starting point.

They treat the system as the starting point.

Architecture first, generation second

You can see this in how high-performing teams handle architectural decisions.

There’s usually a record of where the system is headed, often captured as architecture decision records.

Not a thick document nobody reads, but actual decisions that got written down and get checked on.

When new code comes in, whether a person or a model wrote it, it’s expected to fit that direction. Someone senior owns that.

They hold the line.

Most teams don’t work this way.

Architectural ownership tends to be everyone’s responsibility, which usually means it’s nobody’s.

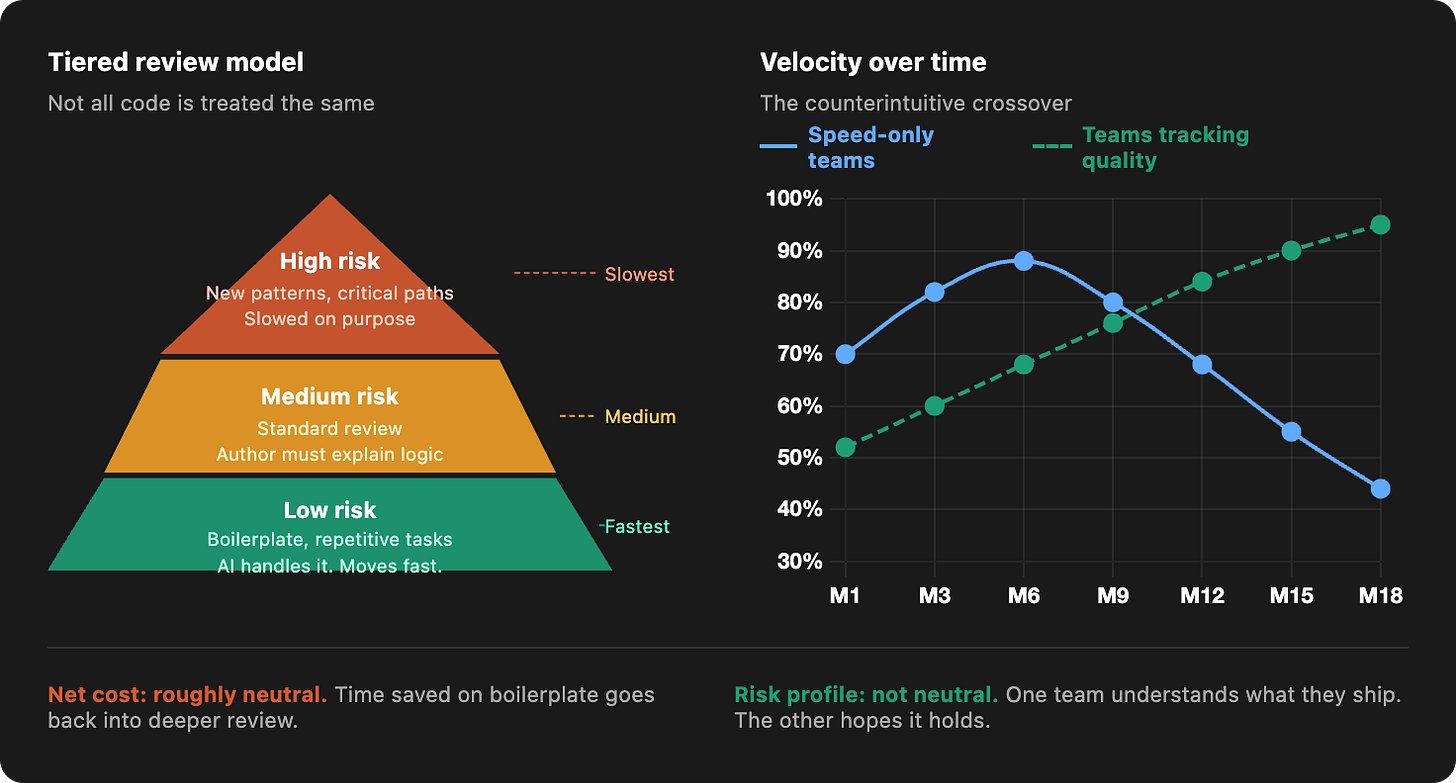

Tiered review, not uniform scrutiny

Review works differently in these teams. Not every change gets treated the same, but the difference is explicit.

Small, low-risk changes move quickly.

Larger changes, especially those that introduce new patterns or touch critical paths, are slowed down on purpose.

What matters is not just whether the code runs, but whether the person who wrote it or approved it can explain how it works and why it was structured that way.

That expectation shows up in subtle ways: a pull request is less likely to move forward if the author cannot walk through the logic, even if the tests pass.

And the logic is pretty simple.

AI is great at handling the boring, repetitive stuff.

So let it.

The time you save there goes back into actually understanding the code that matters.

The code that, if it breaks, hurts.

The net cost is roughly neutral. The risk profile is not.
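To make “explicit” concrete, here is a minimal sketch of what a tiering rule might look like in Python. Everything in it is an assumption for illustration: the `Change` fields, the `CRITICAL_PREFIXES` paths, and the 50-line threshold are hypothetical, and a real team would pick its own.

```python
from dataclasses import dataclass

# Hypothetical values for illustration; each team would choose its own.
CRITICAL_PREFIXES = ("payments/", "auth/")
SMALL_CHANGE_LINES = 50


@dataclass
class Change:
    lines_changed: int
    paths: list[str]
    introduces_new_pattern: bool
    author_can_explain: bool  # can the author walk a reviewer through the logic?


def review_tier(change: Change) -> str:
    """Return 'blocked', 'deep', or 'fast' for a proposed change."""
    # Passing tests are not enough: someone has to be able to explain the change.
    if not change.author_can_explain:
        return "blocked"

    # New patterns and critical paths are slowed down on purpose.
    touches_critical = any(p.startswith(CRITICAL_PREFIXES) for p in change.paths)
    if touches_critical or change.introduces_new_pattern:
        return "deep"

    # Large changes are hard to hold in your head, so they get deep review too.
    if change.lines_changed > SMALL_CHANGE_LINES:
        return "deep"

    return "fast"
```

The point of writing the rule down is not the specific numbers. It is that the decision to review lightly becomes something the team chose, rather than something that happened to it.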

Measuring what actually matters

These teams also watch different numbers.

Velocity exists, but it’s not the only signal that matters.

Code churn, which is how often recently merged code needs to be substantially revised, is a reliable early indicator that comprehension debt is building.

How long it takes to fix a bug in AI-generated code tells you a lot.

If it’s taking forever, chances are nobody really understood that code in the first place.

Neither of these metrics is exotic.

Most teams just aren’t tracking them.

The result, counterintuitively, is that these teams end up moving faster over time. Because the system remains understandable, changes carry less hidden risk.

Engineers spend less time rediscovering how things work.

Decisions hold instead of being undone.

6. The Uncomfortable Truth About What This Means for Your Team

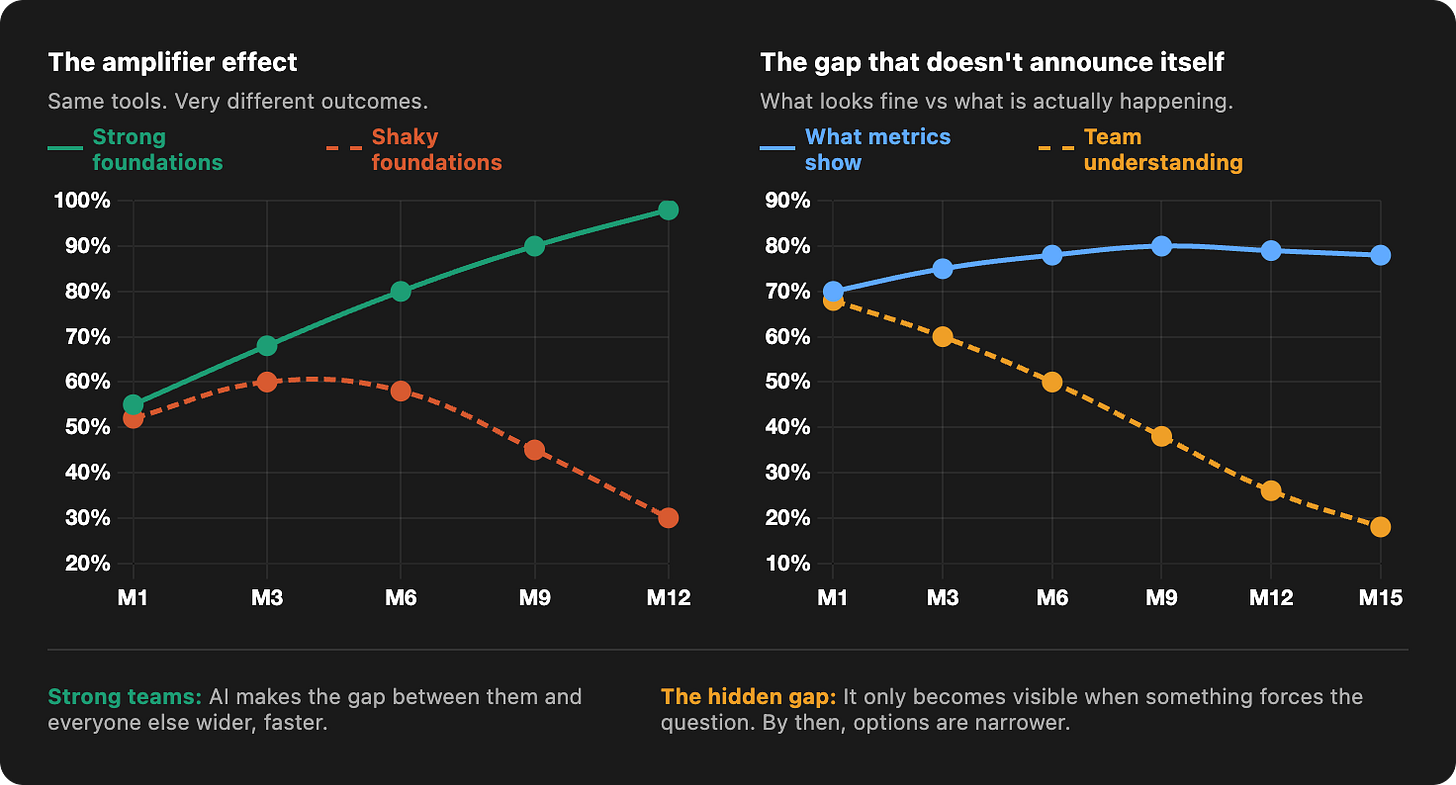

These tools amplify whatever is already there.

Strong foundations, clear ownership, a team that actually understands how the pieces fit together. All of it gets faster and better.

But if those foundations were shaky to begin with, the same acceleration just pushes more code into the places that were already hard to work through.

The gaps don’t stay the same size.

They grow.

Decisions that were slightly unclear become harder to untangle.

Things that could have been sorted out early start to feel permanent.

Judgment matters more, not less

Over time, one thing becomes clear. Your team’s judgment has never mattered more.

Because the leverage on that judgment has never been higher.

Every decision about what to generate, what to accept and what to revisit carries more weight than it used to.

A mistake that once stayed local can now spread through the entire system before anyone realises what happened.

The tools, used carefully, deliver real value.

But the teams that hold onto that value over time are the ones who stay in control of what the code actually means. Not just how much of it exists.

The gap that doesn’t announce itself

For a while, everything still looks like progress.

The codebase grows, features ship, the metrics look fine. But the real gap, how much of the system anyone can actually account for, stays hidden.

It only becomes visible when something forces the question.

By then, the options are already narrower.

The tools did exactly what they were supposed to do. What built up quietly in the background was the cost of treating understanding as something that could always wait.

But it couldn’t.